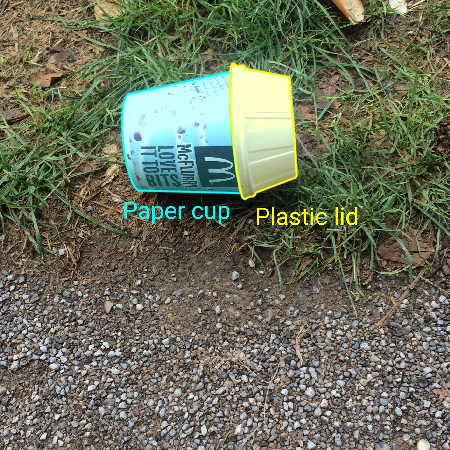

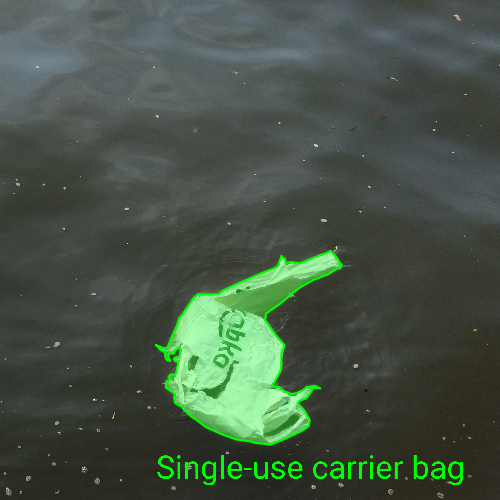

TACO is a growing image dataset of waste in the wild. It contains images of litter taken in diverse environments: woods, roads and beaches. These images are manually labeled and segmented according to a hierarchical taxonomy to train and evaluate object detection algorithms. Currently, images are hosted on Flickr, and a server at tacodataset.org collects more images and annotations.

For convenience, annotations are provided in COCO format. Check the metadata here: http://cocodataset.org/#format-data
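As a rough illustration of what the COCO layout looks like in practice, the sketch below counts annotations per category using only the standard library. The `example` dictionary is a tiny hand-made stand-in, not real TACO data, and the helper names `load_coco` and `count_annotations_per_category` are hypothetical; pass the path of whichever annotation file you downloaded to `load_coco`.

```python
import json
from collections import Counter


def load_coco(path):
    """Load a COCO-style annotation file from disk (path supplied by the caller)."""
    with open(path, "r", encoding="utf-8") as f:
        return json.load(f)


def count_annotations_per_category(coco):
    """Return a Counter mapping category name -> number of annotations."""
    # COCO keeps categories and annotations in separate lists linked by id.
    id_to_name = {cat["id"]: cat["name"] for cat in coco["categories"]}
    return Counter(id_to_name[ann["category_id"]] for ann in coco["annotations"])


# Hand-made example in COCO layout (illustrative only, not TACO data).
example = {
    "images": [{"id": 0, "file_name": "a.jpg", "width": 640, "height": 480}],
    "categories": [{"id": 1, "name": "Can"}, {"id": 2, "name": "Bottle"}],
    "annotations": [
        {"id": 10, "image_id": 0, "category_id": 1, "bbox": [5, 5, 20, 30]},
        {"id": 11, "image_id": 0, "category_id": 1, "bbox": [50, 60, 10, 10]},
        {"id": 12, "image_id": 0, "category_id": 2, "bbox": [100, 80, 15, 40]},
    ],
}

counts = count_annotations_per_category(example)
print(counts.most_common())
```

The same function should work on any file that follows the COCO object-detection layout, since it only relies on the `categories` and `annotations` keys.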

TACO is still relatively small, but it is growing. Stay tuned!

For more details check our paper: https://arxiv.org/abs/2003.06975

If you use this dataset and API in a publication, please cite us using:

@article{taco2020,
    title={TACO: Trash Annotations in Context for Litter Detection},
    author={Pedro F Proença and Pedro Simões},
    journal={arXiv preprint arXiv:2003.06975},
    year={2020}
}

December 20, 2019 - Added 785 more images and 2642 litter segmentations.

November 20, 2019 - TACO is officially open for new annotations: http://tacodataset.org/annotate

To install the required Python packages, simply run:

pip3 install -r requirements.txt

Additionally, to use demo.ipynb, you will also need the COCO Python API. You can get it using:

pip3 install git+https://github.com/philferriere/cocoapi.git#subdirectory=PythonAPI

To download the dataset images, simply run:

python3 download.py

Our API contains a Jupyter notebook, demo.ipynb, to inspect the dataset and visualize annotations.

Unlabeled data

A list of URLs for both unlabeled and labeled images is now also provided in data/all_image_urls.csv.

Each row contains one URL for the original image (second column) and, for images hosted by Flickr, one URL for a VGA-resized version (first column). If you decide to annotate these images using other tools, please make the annotations public and contact us so we can keep track.
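A minimal sketch of reading such a two-column URL list is shown below. It assumes a plain comma-separated file with no header row, which is not confirmed here, and the helper name `read_image_urls` and the example.com URLs are made up for illustration.

```python
import csv
import io


def read_image_urls(fileobj):
    """Return a list of (resized_url, original_url) pairs from an open CSV file."""
    pairs = []
    for row in csv.reader(fileobj):
        if len(row) < 2:
            continue  # skip blank or malformed lines
        # First column: VGA-resized version; second column: original image.
        resized_url, original_url = row[0].strip(), row[1].strip()
        pairs.append((resized_url, original_url))
    return pairs


# Stand-in for the CSV contents (assumed layout, fake URLs).
sample = io.StringIO(
    "https://example.com/small/1.jpg,https://example.com/orig/1.jpg\n"
    "\n"
    "https://example.com/small/2.jpg,https://example.com/orig/2.jpg\n"
)
pairs = read_image_urls(sample)
print(len(pairs), "image URL pairs")
```

To use it on the real file, open data/all_image_urls.csv with `open(..., newline="")` and pass the file object in; if the file turns out to have a header row, skip the first entry.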

Unofficial data

Annotations submitted via our website are added weekly to data/annotations_unofficial.json. These have not yet been reviewed by us; some may be inaccurate or have poor segmentations.

You can use the same command to download the respective images:

python3 download.py --dataset_path ./data/annotations_unofficial.json

The implementation of Mask R-CNN by Matterport is included in /detector with a few modifications. Requirements are the same. Before using it, the dataset needs to be split. You can either download our weights and splits or generate the splits from scratch using the split_dataset.py script, which generates N random train, val, test subsets. For example, run this inside the detector directory:

python3 split_dataset.py --dataset_dir ../data
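For readers who want to see the idea behind a random train/val/test split, here is a minimal sketch. It is not the code of split_dataset.py: the function name `split_ids`, the 10% val and test fractions, and the fixed seed are all assumptions made for illustration.

```python
import random


def split_ids(image_ids, val_fraction=0.1, test_fraction=0.1, seed=0):
    """Shuffle image ids and cut them into disjoint train, val and test lists."""
    ids = list(image_ids)
    # A seeded generator keeps the split reproducible across runs.
    random.Random(seed).shuffle(ids)
    n_test = int(len(ids) * test_fraction)
    n_val = int(len(ids) * val_fraction)
    test = ids[:n_test]
    val = ids[n_test:n_test + n_val]
    train = ids[n_test + n_val:]
    return train, val, test


# 100 fake image ids stand in for the ids found in an annotation file.
train, val, test = split_ids(range(100))
print(len(train), len(val), len(test))
```

Calling the function with different seeds gives different random splits, which is one simple way to obtain several subsets for cross-validation.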

For further usage instructions, check detector/detector.py.

As you can see here, most of the original classes of TACO have very few annotations, so these must be either left out or merged together. detector/taco_config contains several class maps from the original classes to target classes, each keeping the most dominant classes, e.g., Can, Bottle and Plastic bag; pick the one that suits your problem. Feel free to make your own classes.
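The merging step can be pictured as a simple lookup from original labels to target classes, with everything unmapped falling into a catch-all class. The sketch below assumes a plain dictionary; the actual file format in detector/taco_config is not shown here, and `CLASS_MAP`, `remap_labels`, the "Other" fallback, and the label names are illustrative only.

```python
from collections import Counter

# Hypothetical class map: original label -> target class.
CLASS_MAP = {
    "Drink can": "Can",
    "Food can": "Can",
    "Clear plastic bottle": "Bottle",
    "Glass bottle": "Bottle",
    "Plastic bag": "Plastic bag",
}


def remap_labels(labels, class_map, fallback="Other"):
    """Map each original label to its target class; unmapped labels get the fallback."""
    return [class_map.get(label, fallback) for label in labels]


# Example labels (illustrative, not taken from the dataset).
original = ["Drink can", "Glass bottle", "Cigarette", "Food can", "Plastic bag"]
merged = remap_labels(original, CLASS_MAP)
merged_counts = Counter(merged)
print(merged_counts)
```

To leave rare classes out instead of merging them, filter out the entries that map to the fallback before training.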
