This repository is forked from Real-Time-Voice-Cloning, which only supports English.

English | 中文

🌍 Chinese: supports Mandarin and has been tested with multiple datasets: aidatatang_200zh, magicdata

🤩 PyTorch: works with PyTorch, tested with version 1.9.0 (the latest as of August 2021) on Tesla T4 and GTX 2060 GPUs

🌍 Windows + Linux: tested on both Windows and Linux after fixing nits

🤩 Easy & Awesome: good results with only a newly trained synthesizer, by reusing the pretrained encoder/vocoder

Follow the original repo to check that your environment is ready. **Python 3.7 or higher** is needed to run the toolbox.
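As a quick sanity check of the requirement above, the following minimal sketch verifies the interpreter version and whether PyTorch can be found. The helper names `python_ok` and `torch_installed` are hypothetical and not part of this repository.

```python
import importlib.util
import sys

MIN_PYTHON = (3, 7)  # minimum version stated in this README


def python_ok(version_info=sys.version_info, minimum=MIN_PYTHON):
    """Return True if the (major, minor) version meets the minimum."""
    return tuple(version_info[:2]) >= minimum


def torch_installed():
    """Return True if the torch package can be located, without importing it."""
    return importlib.util.find_spec("torch") is not None


if __name__ == "__main__":
    print("Python >= 3.7:", python_ok())
    print("PyTorch installed:", torch_installed())
```

This only checks that the packages are discoverable. It does not confirm that a GPU is visible to PyTorch.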

Run pip install -r requirements.txt to install the remaining necessary packages. Note that we use the pretrained encoder/vocoder but not the pretrained synthesizer, since the original model is incompatible with Chinese symbols. This means demo_cli does not work at the moment.

Download the aidatatang_200zh or SLR68 dataset and unzip it: make sure you can access all .wav files in the train folder
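One way to confirm that the .wav files are reachable after unzipping is to count them recursively. This is a minimal sketch: `count_wavs` is a hypothetical helper, and the path you pass depends on where you unpacked the dataset.

```python
from pathlib import Path


def count_wavs(train_dir):
    """Count .wav files anywhere under train_dir (suffix match is case-insensitive)."""
    root = Path(train_dir)
    if not root.is_dir():
        raise FileNotFoundError(f"not a directory: {root}")
    return sum(
        1 for p in root.rglob("*") if p.is_file() and p.suffix.lower() == ".wav"
    )
```

A count of zero usually means the archive was not fully extracted or the wrong folder was given.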

Preprocess the audio and the mel spectrograms:

python synthesizer_preprocess_audio.py <datasets_root>

Pass the parameter --dataset {dataset} to select the dataset; aidatatang_200zh and magicdata are supported

Preprocess the embeddings:

python synthesizer_preprocess_embeds.py <datasets_root>/SV2TTS/synthesizer

Train the synthesizer:

python synthesizer_train.py mandarin <datasets_root>/SV2TTS/synthesizer
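The three commands above can be chained in a small driver script. The sketch below assumes it is run from the repository root and uses only the scripts and arguments shown in this README. `build_commands` and `run_pipeline` are hypothetical helper names, and the default dataset value is an assumption.

```python
import subprocess
import sys
from pathlib import Path


def build_commands(datasets_root, dataset="aidatatang_200zh"):
    """Return the preprocessing and training commands as argument lists."""
    root = Path(datasets_root)
    synth_dir = str(root / "SV2TTS" / "synthesizer")
    py = sys.executable  # reuse the interpreter running this script
    return [
        [py, "synthesizer_preprocess_audio.py", str(root), "--dataset", dataset],
        [py, "synthesizer_preprocess_embeds.py", synth_dir],
        [py, "synthesizer_train.py", "mandarin", synth_dir],
    ]


def run_pipeline(datasets_root, dataset="aidatatang_200zh"):
    """Run each step in order, stopping at the first failure."""
    for cmd in build_commands(datasets_root, dataset):
        print("running:", " ".join(cmd))
        subprocess.run(cmd, check=True)
```

Training runs until you stop it, so in practice you may prefer to run the first two steps with a script like this and start training separately.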

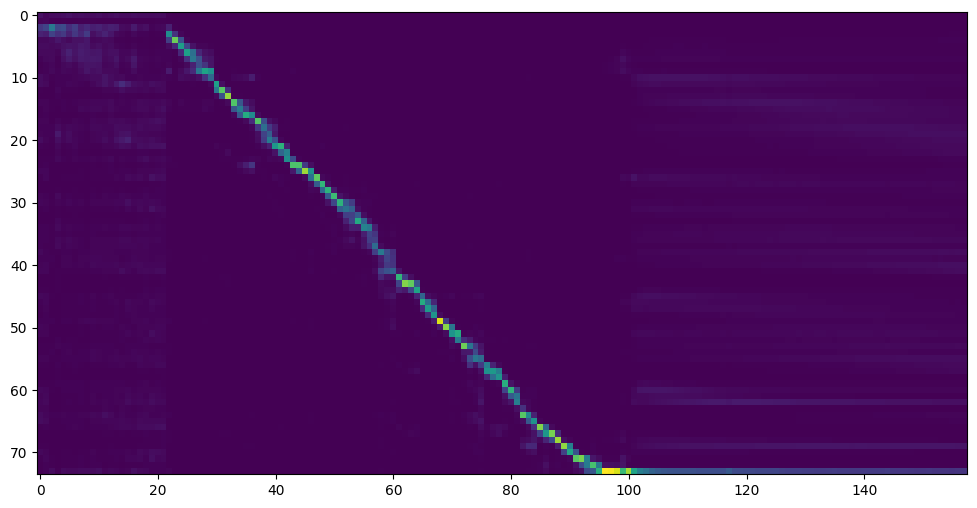

Go to the next step when the attention line appears and the loss meets your needs; training output is saved in the folder synthesizer/saved_models/.

FYI, my attention line appeared after 18k steps and the loss dropped below 0.4 after 50k steps.

A link to my early trained model: Baidu Yun (code: aid4)

You can then try the toolbox:

python demo_toolbox.py -d <datasets_root>

or

python demo_toolbox.py

Good news 🤩: Chinese characters are supported
